Scaling Real-time Telemetry to 10M+ Concurrent Streams

How we architected a fault-tolerant ingestion pipeline using Rust, Kafka, and ScyllaDB to handle petabytes of high-frequency patient data without dropping a single heartbeat.

In predictive medicine, data freshness is not a luxury—it is a patient safety requirement. A 5-minute delay in processing a telemetry packet could mean missing the early warning signs of a cardiac event.

When we set out to build the Unified Health Intelligence Platform (UHIP), our challenge was clear: design a system capable of ingesting 10 million concurrent sensor streams (ECG, SpO2, Accelerometry) with end-to-end latency under 100ms.

This post details the architecture that made this possible, moving from our initial Python prototype to a high-performance Rust + ScyllaDB production cluster.

The Ingestion Layer: Rust at the Edge

Our initial prototype used a standard Python FastAPI service. While excellent for development velocity, it hit a bottleneck at around 5k concurrent connections per node due to the Global Interpreter Lock (GIL) and garbage collection pauses.

We rewrote the edge ingestion service in Rust using the Tokio runtime. The results were immediate:

- Throughput: Increased from 5k to 150k events/sec per node.

- Memory Footprint: Reduced by 90% (from 1.2GB to 120MB).

- Tail Latency (p99): Stabilized at <5ms, eliminating GC spikes.

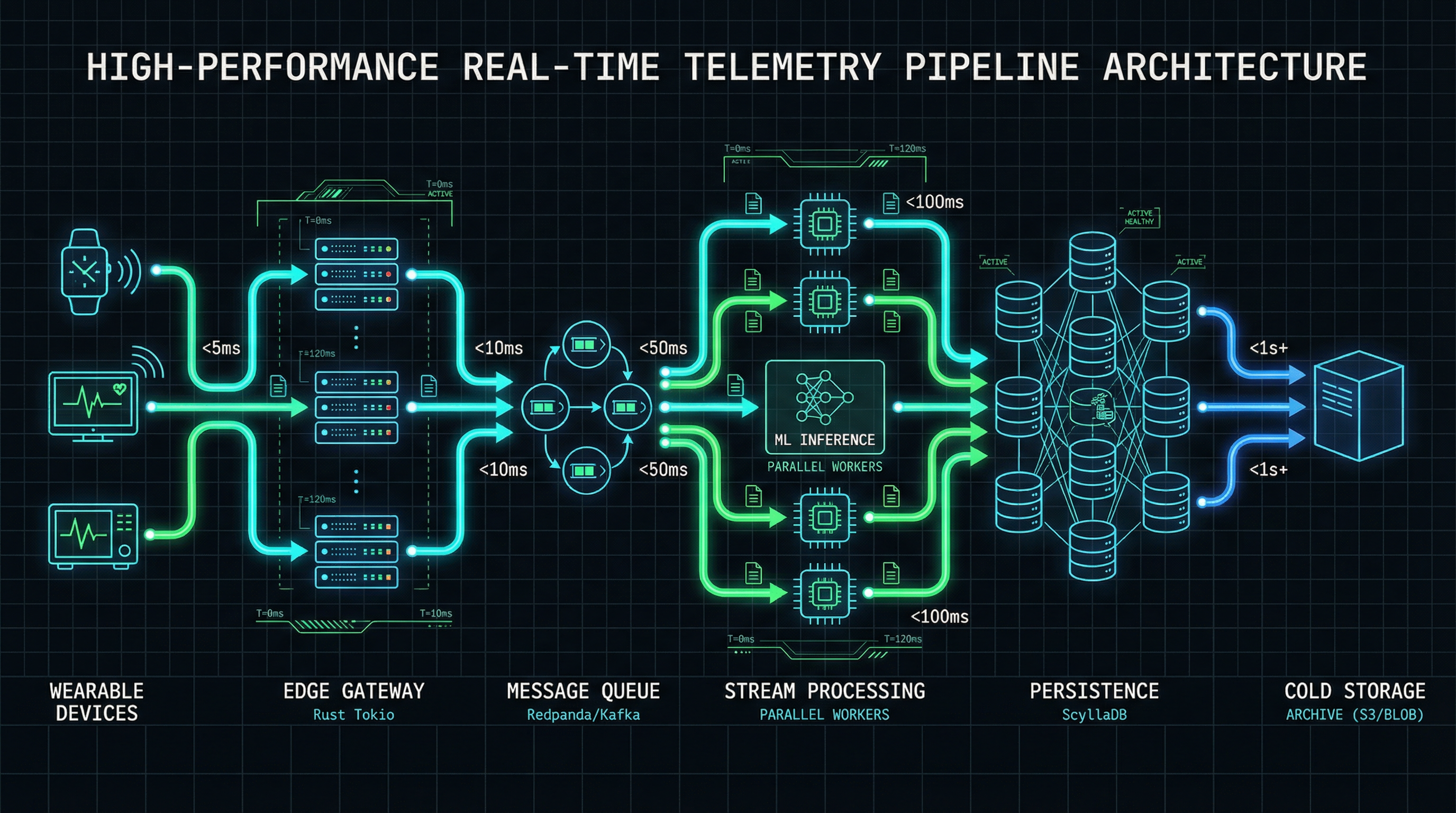

Architecture Diagram: The Telemetry Pipeline

Figure 1: Data flows from wearable devices via MQTT/WebSockets to our Rust Edge Gateway, is buffered in Redpanda (Kafka-compatible), and processed by stream workers before persisting to ScyllaDB.

Persistence: Why ScyllaDB?

We needed a database that could handle massive write throughput (write-heavy workload) with predictable low latency. We evaluated PostgreSQL (TimescaleDB) and Cassandra before settling on ScyllaDB.

ScyllaDB's shard-per-core architecture allows us to saturate the NVMe drives on our storage nodes. We use a Time-Window Compaction Strategy (TWCS) for our raw telemetry tables, as data is immutable and expires after 30 days (moved to cold storage).

Real-time Inference

Ingestion is only half the battle. To provide "Intelligence," we need to run inference on this data stream. We use a Stream Processing Architecture where the Inference Engine subscribes to the Kafka topics directly.

This decoupling ensures that a slow model doesn't backpressure the ingestion layer. If the inference cluster falls behind, the data remains safely buffered in Redpanda.

Security & Compliance

Handling PHI (Protected Health Information) at this scale requires rigorous security. All data is encrypted in transit (mTLS) and at rest (AES-256). Furthermore, we strip PII (Personally Identifiable Information) at the edge, replacing it with ephemeral session IDs that can only be re-linked to a patient record within the secure enclave of the Intelligence Core.

Join the Engineering Team

We are looking for Systems Engineers, Rustaceans, and ML Ops specialists who want to build software that saves lives.